Instagram to start parent alerts for teen suicide, self-harm searches

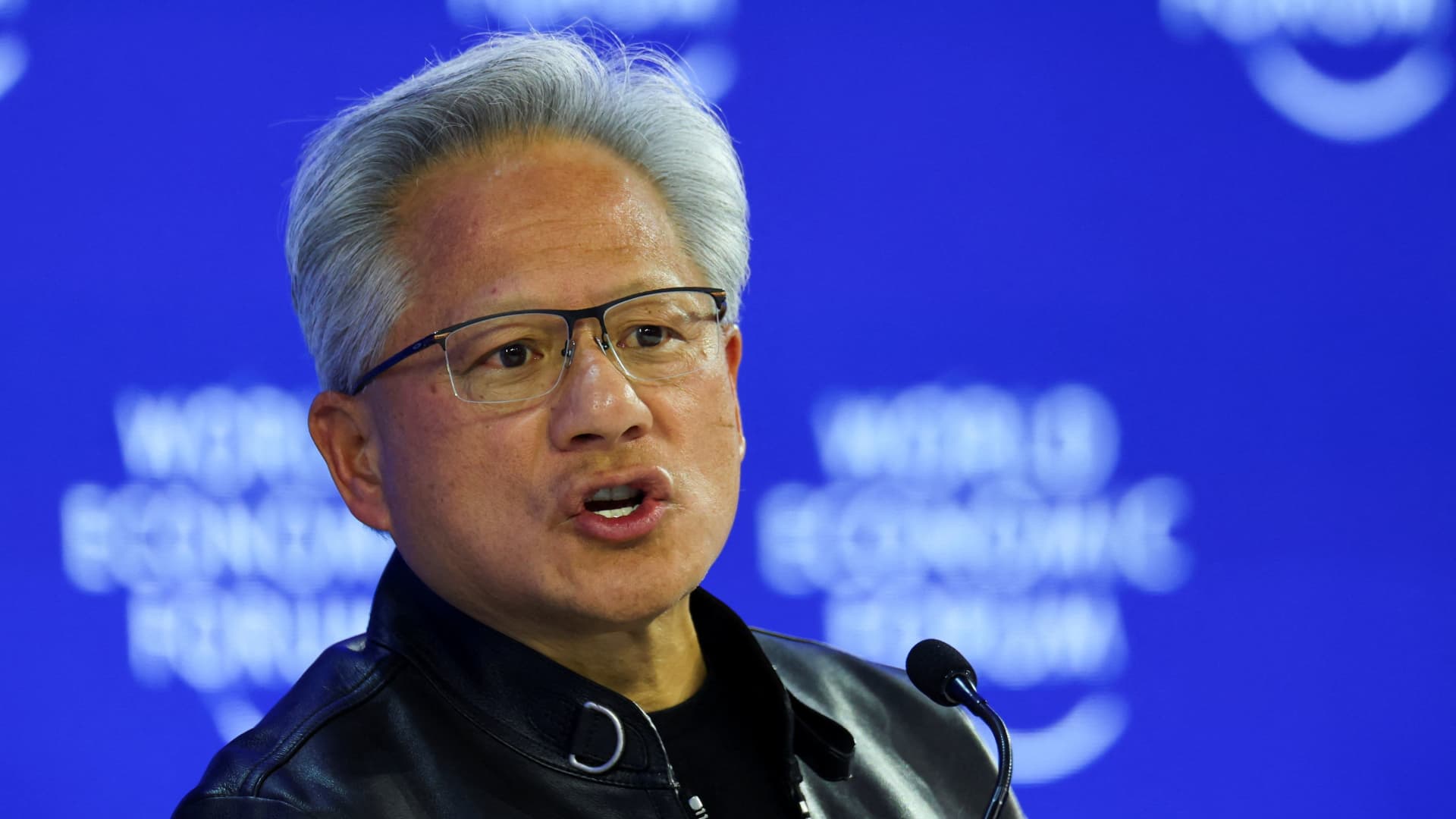

Mark Zuckerberg, chief executive officer of Meta Platforms Inc., exits Los Angeles Superior Court in Los Angeles, California, US, on Wednesday, Feb. 18, 2026.

Kyle Grillot | Bloomberg | Getty Images

Instagram said Thursday that it will alert parents when teens repeatedly search for suicide and self-harm terms as parent company Meta comes under scrutiny across multiple trials.

“These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen,” the company said in a release.

The parental supervision feature comes as the social media company faces allegations that the design and functionality of apps like Instagram foster detrimental effects on the mental health of young users.

Experts have described the trials and related legal cases involving companies like Google’s YouTube, TikTok and Snap as the social media industry’s “big tobacco” moment as the courts weigh the alleged harm of their products and their supposed efforts to mislead the public about those adverse effects.

The Instagram alerts will begin rolling out next week in the U.S., U.K., Australia and Canada.

Parents will receive the alerts if their teenagers are repeatedly searching during a “short period of time” for “phrases promoting suicide or self-harm, phrases that suggest a teen wants to harm themselves, and terms like ‘suicide’ or ‘self-harm,’” the company said in a blog post.

The company called it “the right starting point” as it tries to find the right threshold for when to send an alert. Meta said parents may receive alerts that do not indicate a real cause for concern, but that it would continue to listen to feedback on the feature.

The alerts will be delivered to parents via email, text, WhatsApp or within Instagram.

The alerts feature requires that both parents and teenagers enroll in Instagram’s parental supervision tools.

Parents who receive the alerts will see a message explaining their teen’s concerning Instagram search habits, and they will have an option to view additional resources for help, the company said.

Meta said that it plans to eventually release similar parental alerts “for certain AI experiences” that are intended to notify guardians “if a teen attempts to engage in certain types of conversations related to suicide or self-harm with our AI.”

Those upcoming AI-related parental alerts follow rising concerns that AI chatbots from tech companies like OpenAI and Meta engage in questionable and potentially harmful mental-health-related conversations with users.

Meta offers its own AI chatbots and is working on a powerful new AI model codenamed Avocado that’s set to debut later this year, CNBC reported.

Meta CEO Mark Zuckerberg testified last week in Los Angeles Superior Court as part of a trial in which a plaintiff alleges that she became addicted to social media apps like Instagram when she was underage.

During his testimony, Zuckerberg reiterated Meta’s position that owners of mobile operating systems and related app stores, like Apple and Google, are better suited than app makers to verify users’ ages.

Regarding age verification, the Federal Trade Commission said Wednesday that it won’t bring enforcement actions under the Children’s Online Privacy Protection Rule, or COPPA Rule, against “certain website and online service operators” that collect user data that can be used to inform age-verification technologies.

The FTC said the policy statement is part of a larger review of the COPPA Rule as it pertains to age verification.

Legal filings released last week as part of a separate Meta-related trial in New Mexico detail internal messages from employees discussing how the company’s encryption efforts could make it more difficult to disclose child sexual abuse material reports to authorities.

Meta has denied the allegations in both the California and New Mexico cases.

Last week, CNBC reported that the National Parent Teacher Association is not renewing its funding relationship with Meta due to the various legal challenges facing the company regarding digital safety for children.

If you are having suicidal thoughts or are in distress, contact the Suicide & Crisis Lifeline at 988 for support and assistance from a trained counselor.

