Meta rolls out in-house AI chips weeks after massive Nvidia, AMD deals

Meta’s 5-gigawatt Hyperion data center under construction in Richland Parish, Louisiana, Jan. 9, 2026.

Courtesy of Meta

Meta on Wednesday revealed four custom, in-house chips tailored for artificial intelligence-related tasks as part of the company’s massive data center expansion plans.

The specialized silicon is part of the Meta Training and Inference Accelerator, or MTIA, family of chips, which it publicly revealed for the first time in 2023 before unveiling a second-generation version in 2024.

Meta Vice President of Engineering Yee Jiun Song told CNBC that by designing custom chips, which are then manufactured by Taiwan Semiconductor, the social media giant can get better performance per dollar across its data center fleet than it would by relying solely on outside vendors.

“This also provides us with more diversity in terms of silicon supply, and insulates us from price changes to some extent,” Song said. “This is a little bit more leverage.”

The first new chip, MTIA 300, was deployed a few weeks ago and is intended to help train smaller AI models that underpin Meta’s core ranking and recommendation tasks, Song said. These kinds of tasks include showing people relevant content and online ads within the company’s family of apps like Facebook and Instagram.

The upcoming chips — MTIA 400, MTIA 450 and MTIA 500 — are intended for more cutting-edge generative AI-related inference tasks like creating images and videos based on people’s written prompts. The chips will not be used for training giant large language models, Song said.

One Meta data center rack will include 72 of Meta’s in-house MTIA 400 chips, optimized to accelerate AI inference. MTIA 400 has completed the testing phase and is expected to be deployed in Meta data centers soon.

Courtesy: Meta

Meta said in a blog post that it had finished testing the MTIA 400 and is “on the path to deploying it in our data centers,” while the other two chips will be operational in 2027.

“It’s unusual for any silicon company or team to be releasing a new chip every six months. It’s a very quick cadence,” Song said. “And the big reason for this is that we find ourselves building out capacity so quickly at the moment, and spending so much on capex, that at any given time we want to have the state-of-the-art chip to deploy.”

Song said the company expects the chips to have a “standard five-plus years of useful lifetime.”

Meta’s AI spending spree includes a gigantic data center in Louisiana and two others in Ohio and Indiana. Meta is also looking to lease space at the Stargate site in Texas after OpenAI and Oracle scrapped plans to expand that AI data center site, Bloomberg reported.

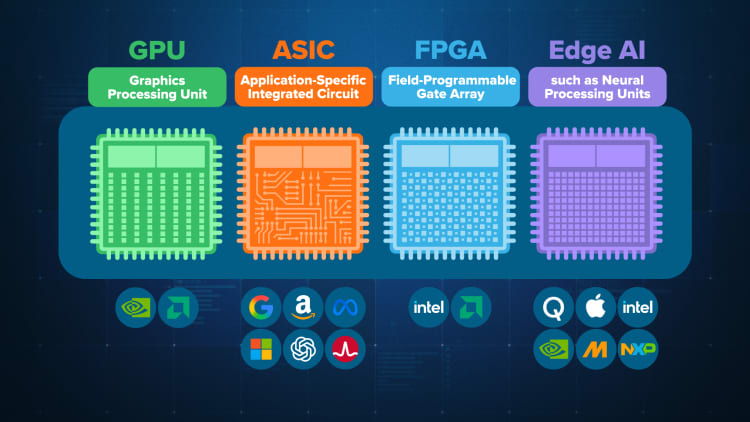

Tech giants like Google have been developing their own in-house silicon to fill data centers in recent years, as they seek an alternative to the costly, supply-constrained GPUs from Nvidia and AMD.

These hyperscalers have been creating so-called application-specific integrated circuits, or ASICs, which are smaller and cheaper than general-purpose GPUs, the workhorses of AI, but can perform only a narrower set of tasks.

Google was first to the ASIC game, releasing its first Tensor Processing Unit in 2015. Amazon was next, with its first custom chip announced in 2018. While these tech giants incorporate their AI chips as part of their respective cloud computing platforms so customers can access them, Meta’s MTIA chips are used entirely for internal purposes.

Meta’s next-generation MTIA 400 custom accelerator has completed the testing phase and is expected to be deployed in Meta data centers soon.

Courtesy: Meta

The upcoming MTIA chips will contain more high-bandwidth memory, or HBM, to help power GenAI-related inference tasks.

The tech industry’s mega AI push has led to a shortage of memory chips in the broader market, which means that Meta’s ambitious silicon roadmap could face future supply chain constraints.

“We’re absolutely worried about HBM supply,” Song said. “But we think that we have secured our supply for what we’re planning to build out.”

Memory is a cyclical business, and chipmakers typically buy it from suppliers such as Samsung, SK Hynix and Micron on short-term contracts.

Song wouldn’t comment on whether the company has signed longer-term contracts with memory vendors to protect against the shortage, but said that Meta has a “diversified” approach to its supply chain and silicon strategy.

In recent weeks, Meta inked deals to fill its data centers with millions of Nvidia GPUs and up to 6 gigawatts of AMD GPUs over multiple years.

“The workloads are changing so quickly that we want to make sure that we have options,” Song said, referring to the chip deals.

Meta’s new in-house chips are manufactured by Taiwan Semi, which primarily operates out of Taiwan and has a large, new chip fabrication campus in Arizona.

Meta declined to comment on whether the chips will be made in Arizona.

The majority of Meta’s “substantial team” of hundreds of engineers who worked on the silicon are based in the U.S., Song said. Of Meta’s 30 total operational and planned data centers, 26 of them are in the U.S.