A closer look at Nvidia’s $20 billion bet on tech for a new AI chip

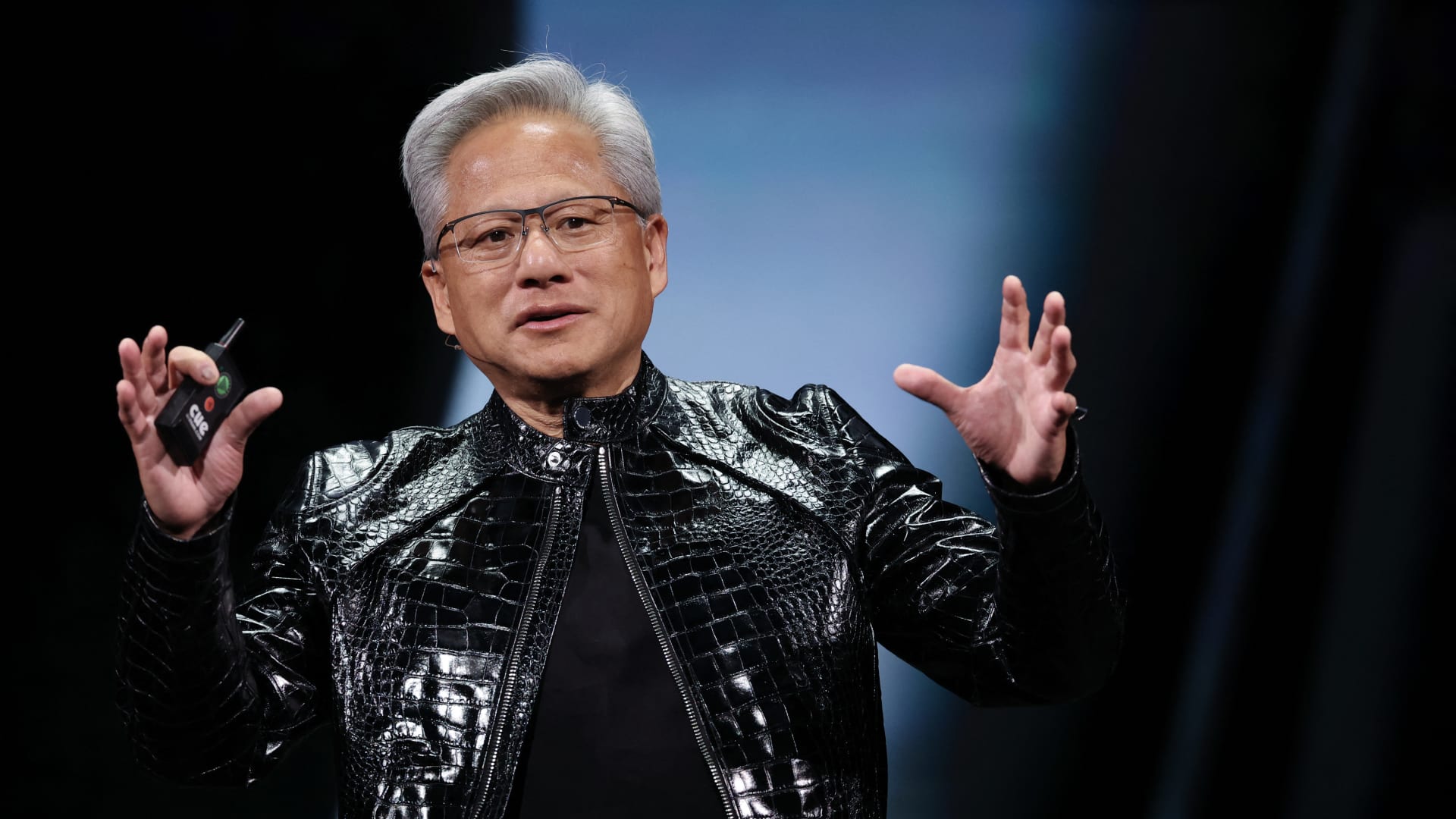

On the day before Christmas, when few stocks were stirring, a pricey and pivotal transaction jolted the AI computing race: Nvidia was spending a reported $20 billion to license technology from chip startup Groq and hire key employees, including its CEO, who previously helped Google create what’s become the leading alternative to Nvidia’s AI processors.

In the months since, Nvidia’s offensive move has arguably flown under the radar, considering its competitive ramifications in the artificial intelligence gold rush. Perhaps it was lost in the Christmastime shuffle, or in the torrent of other deals and investments that have been flowing from the world’s most valuable company over the past year.

That should change next week, when Nvidia holds its annual GTC event, called the GPU Technology Conference in its early days, in San Jose, California. The four-day gathering is a big deal in AI. It takes place at the San Jose McEnery Convention Center, with Monday’s keynote address from Nvidia CEO Jensen Huang held at the nearby SAP Center, where the NHL’s San Jose Sharks play, a venue befitting Jensen’s leather jacket-wearing, rock star-like status.

Throughout the week, Nvidia plans to share at least some of its vision for incorporating Groq’s chip technology into its already-dominant AI computing ecosystem. “I’ve got some great ideas that I’d like to share with you at GTC,” Jensen said on the chipmaker’s late February earnings call.

Those ideas figure to be among the notable developments at a conference that’s been dubbed the “Super Bowl of AI.” Nvidia is also expected to update us on its roadmap for its bread-and-butter graphics processing units (GPUs), including its next-generation Vera Rubin family.

The main reason for the Groq intrigue: Nvidia is likely to harness Groq’s technology to build a brand-new chip targeting the daily use of AI models, a process known as inference, according to Wall Street analysts.
Inference is becoming a larger and more competitive part of the AI computing picture. Plus, it’s the source of revenue for Nvidia’s data center customers.

Nvidia’s GPUs are the clear-cut performance leader in the training stage of AI computing, where the models are fed vast amounts of data to be prepared for real-world usage. Nvidia’s dominance in training fueled its meteoric ascent in recent years.

The inference market, however, is much more crowded, as AI adoption goes mainstream and customers seek out cost-effective ways to meet the booming demand. Companies are essentially trying to get their hands on whatever kind of chips they can.

Advanced Micro Devices, the distant No. 2 maker of GPUs, is finding some traction in inference, recently signing up Meta Platforms as a customer in a splashy partnership announcement. Meanwhile, the custom chip initiatives at large tech companies, including Meta, are generally seen as targeting the inference market.

To be sure, Google’s in-house Tensor Processing Units (TPUs) are formidable challengers in both training and inference, and the newfound success of Google’s Gemini chatbot, built on TPUs, has elevated their reputation as Nvidia’s biggest threat. Google co-designs TPUs with Broadcom.

Amazon has also touted its in-house Trainium chip’s capabilities in both tasks. Anthropic, the AI startup behind the Claude model, uses Trainium, though in a reflection of the hunt for any and all kinds of computing, Anthropic is also using TPUs and inked a deal with Nvidia in the fall.

Another competitor to know: Cerebras, an AI startup preparing for an initial public offering. Oracle co-CEO Clay Magouyrk name-dropped Cerebras for the first time on the company’s earnings call earlier this week.

Nvidia is no slouch in inference. The figure is perhaps a bit outdated, but Nvidia disclosed in 2024 that about 40% of its revenue came from inference.
At last year’s GTC, Jensen told analysts that “the vast majority of the world’s inference is on Nvidia today.” And, on Nvidia’s most recent earnings call in late February, finance chief Colette Kress highlighted that industry publication SemiAnalysis recently “declared Nvidia inference king,” noting that its current-generation Grace Blackwell GPUs offer massive performance improvements over their predecessor, Hopper.

Where Groq fits

Nvidia evidently saw an opportunity to improve what it brings to the table on inference; otherwise, it wouldn’t have shelled out a reported $20 billion for Groq’s technology and talent.

Nvidia didn’t outright acquire the entire Groq company, perhaps to avoid antitrust scrutiny. The licensing deal is billed as non-exclusive, and Groq continues to operate an inference cloud service running on its specialized chips (also, in case there was any confusion, the company has no ties to the other Grok, Elon Musk’s AI chatbot).

Some important people jumped to Nvidia in the deal, though. The most notable addition is Groq’s founder and now-ex CEO, Jonathan Ross. Before starting Groq in 2016, Ross was part of the Google team that developed the original TPU. Ross now holds the title of chief software architect at Nvidia.

Groq developed and brought to market what it called an inference-focused LPU, short for Language Processing Unit. In various podcast interviews over the years, Ross has made it clear that Groq didn’t bother trying to compete with Nvidia on training. Instead, he has said, Groq saw inference computing as the place where the startup could innovate and carve out a lane. So, Groq set out to develop a chip for running AI models that prioritizes speed and efficiency at a lower cost.

A main reason why Nvidia’s GPUs are so good at training AI models is their ability to perform a massive number of calculations at the same time, often called parallel processing.
Keeping it simple, AI models work to identify patterns within a mountain of training data, and that requires doing a lot of math simultaneously. That is why a GPU is superior for AI training to a traditional central processing unit (CPU), which executes tasks sequentially rather than in parallel.

Now, another important trait of GPUs is their flexibility, driven in large part by Nvidia’s CUDA software platform. Jensen has said that CUDA, short for Compute Unified Device Architecture, enables GPUs to perform across all different types of workloads, including inference.

When an AI model is deployed for inference and receives a user’s prompt, the model basically refers back to all those learned patterns to determine what the most appropriate response should be, piece by piece (or token by token, in AI parlance). It makes each decision based on the probabilities it learned from its training data.

But fundamentally, training and inference computing are different, and the chip attributes most desirable for each vary. Groq designed its chips to be really good at inference, and in particular, real-time tasks where speed is of the utmost importance.

Groq’s LPUs use a type of short-term memory, known as SRAM, that is located directly on the chip’s engine, a driving force behind their speediness. GPUs, on the other hand, use a type of short-term memory called high-bandwidth memory, or HBM, which is located right next to the GPU’s engine, not directly on it. The AI boom has created a supply crunch for HBM and set memory prices soaring.

“GPUs are really great at training models. When somebody wants to train a model, I’m just like, ‘Just use GPUs. Don’t talk to us,’” Ross said in a podcast interview with wealth advisory firm Lumida in late 2023.

“But the big difference is, when you’re running one of these models (not training them, running them after they’ve already been made), you can’t produce the 100th word until you’ve produced the 99th,” he added.
“So, there’s a sequential component to them that you just simply can’t get out of a GPU. … It’s how quickly you complete the computation, not just how many computations you can complete in parallel. And we do the computations much faster.”

However, Ross has said he believes Nvidia’s bread-and-butter GPUs and Groq’s technology can complement each other. He made that clear in a separate interview on The Capital Markets podcast, dated February 2025, still many months before he left Groq for Nvidia.

“We’re actually so crazy fast compared to GPUs that we’ve actually experimented a little bit with taking some portions of the model and running it on our LPUs and letting the rest run on GPU. And it actually speeds up and makes the GPU more economical. So, since people already have a bunch of GPUs they’ve deployed, one use case we’ve contemplated is selling some of our LPUs to, sort of, nitro boost those GPUs.”

That comment really jumped out when we came across this year-old interview while searching for additional insight into Groq and Ross. Hearing Ross say that long before he joined Nvidia made us even more intrigued to hear Jensen’s vision next week.

There are a lot of possibilities for Groq-infused Nvidia hardware. Indeed, as AI advances, it makes sense that Nvidia would branch out into more specialized chips. History suggests that the more advanced a certain technology gets, the more specialization there is.

Back on Nvidia’s February earnings call, Jensen indicated that he’s looking at Groq in a similar vein to Mellanox, the networking equipment provider that Nvidia acquired six years ago. “What we’ll do is we’ll extend our architecture with Groq as an accelerator in very much the ways that we extended Nvidia’s architecture with Mellanox,” Jensen said.

That acquisition has aged like fine wine because Nvidia’s networking prowess is a crucial ingredient to its success in the AI boom, transforming it into a one-stop shop for AI computing rather than a simple chip designer.
In its fiscal 2026 fourth quarter alone, Nvidia’s networking business generated around $11 billion in revenue, roughly the same as AMD’s overall revenue. Nvidia’s better-than-expected companywide revenue in Q4 surged 73% year over year to $68.13 billion.

Less than three years ago, Nvidia’s networking revenue was pacing for roughly $10 billion for an entire 12-month period. Now, it’s $11 billion in just three months, exploding alongside its GPU revenue, too.

Investors can only hope the Groq transaction ends up being anywhere near as successful as Mellanox. The journey to finding out starts next week.

(Jim Cramer’s Charitable Trust is long NVDA, GOOGL, META, AVGO and AMZN.)
